New Evidence Shows Search Engines Reinforce Social Stereotypes

In April, an MBA student named Bonnie Kamona reported that a Google image search for “unprofessional hair for work” produced a set of images that almost exclusively depicted women of color. In contrast, her search for “professional hair” delivered images of white women. Two months later, Twitter user Ali Kabir reported that an image search for “three black teenagers” returned a large number of mug shots, while “three white teenagers” retrieved images of young people having fun.

These stories are consistent with a growing body of research on search engine bias.

A good example is the work of Matthew Kay, Cynthia Matuszek and Sean Munson, who examined Google image searches for queries about professions. They compared the gender distribution observed in the images for a given profession with the distribution documented in U.S. labor statistics. For instance, a search for “doctors” retrieved significantly more images of men than would be expected given the number of men who actually work in the profession. The reverse was true for “nurses.” In other words, the researchers showed that image search engines exaggerate gender stereotypes concerning professions.
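The comparison described here can be sketched in a few lines. This is only an illustration of the idea, not the researchers' actual method: the function name `representation_gap`, the label strings, and the toy numbers are all assumptions made up for the example.

```python
def representation_gap(image_labels, baseline_share, group="man"):
    """Observed share of `group` in the image results minus its baseline share.

    A positive value means the group appears more often in the results than
    the baseline (e.g., labor statistics) would predict.
    """
    if not image_labels:
        raise ValueError("image_labels must not be empty")
    observed = sum(1 for label in image_labels if label == group) / len(image_labels)
    return observed - baseline_share


# Toy inputs invented for illustration only; they are not figures from the study.
toy_labels = ["man"] * 8 + ["woman"] * 2
gap = representation_gap(toy_labels, baseline_share=0.6, group="man")
print(f"gap = {gap:+.2f}")
```

A gap near zero would suggest the results track the real-world distribution; a large positive or negative gap would suggest exaggeration in one direction.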

Together with my colleagues Jo Bates and Paul Clough of the University of Sheffield’s Information School, I have been conducting experiments to gauge whether image search engines perpetuate more generalized gender stereotypes.

Here’s the background. On any given day while out in the world, we must interact with people from many walks of life; therefore, we must make judgments about others quickly and accurately. Researchers in the field of “person perception” categorize these judgments along two dimensions: “warmth” and “agency.” Warm people are seen as having pro-social intentions and do not threaten us, although warm traits can be either positive or negative; for example, adjectives such as “emotional,” “kind,” “insecure,” or “understanding” would all count as denoting “warmth.” Agentic people are those who have the competency to carry out their goals and desires and are ascribed traits such as “intelligent,” “rational” and “assertive.”

Psychologists believe that social stereotypes are essentially built from combinations of these two dimensions. Prevailing gender stereotypes hold that women are expected to be high in warmth and low in agency. Men, in contrast, are characterized by agency but not warmth. Individuals who don’t fit these stereotypes suffer social and economic backlash from their peers. For example, in organizational contexts, agentic women and warm men are often passed over for leadership positions.

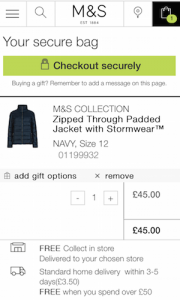

With this framework in mind, we carried out experiments on the Bing search engine, in order to see if the gender distributions of images depicting warm versus agentic people would perpetuate the prevailing gender stereotypes. We submitted queries based on 68 character traits (e.g., “emotional person,” “intelligent person”) to Bing, and we analyzed the first 1,000 images per query. Because search engine results are typically personalized, and because a user’s location is known to be a key characteristic used in personalization, we collected results from four large, Anglophone regions (US, UK, India, and South Africa) by running our queries through the relevant regional servers. We then clustered the character traits based on the proportion of images retrieved that depicted only women, only men, or a mix, in order to see if warm character traits would primarily be depicted by images of women, and agentic character traits by images of men.
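To make the grouping step concrete, here is a minimal sketch under stated assumptions. It assumes each retrieved image has already been labeled `women_only`, `men_only`, or `mixed` (hypothetical label names), and it replaces the clustering step with a much simpler rule: assign each trait to whichever category has the largest share. The trait names and labels below are toy values, not data from our experiments.

```python
from collections import Counter

CATEGORIES = ("women_only", "men_only", "mixed")


def gender_proportions(image_labels):
    """Return the share of images falling into each category."""
    counts = Counter(image_labels)
    total = sum(counts[c] for c in CATEGORIES)
    if total == 0:
        raise ValueError("no images with a recognized label")
    return {c: counts[c] / total for c in CATEGORIES}


def group_by_dominant_category(labels_by_trait):
    """Group traits under the category with the largest share of images.

    This is a simple stand-in for clustering, not the method used in the study.
    """
    groups = {c: [] for c in CATEGORIES}
    for trait, labels in labels_by_trait.items():
        shares = gender_proportions(labels)
        dominant = max(CATEGORIES, key=lambda c: shares[c])
        groups[dominant].append(trait)
    return groups


# Toy labels invented for illustration only.
toy = {
    "trait_a": ["women_only"] * 6 + ["men_only"] * 3 + ["mixed"],
    "trait_b": ["men_only"] * 7 + ["women_only"] * 2 + ["mixed"],
}
groups = group_by_dominant_category(toy)
print(groups)
```

A real analysis would cluster on the full three-way proportions rather than only the largest one, so that traits with near-balanced results are not forced into a gendered group.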

Our results largely confirmed that image search results perpetuate gender stereotypes. A few warm character traits did return a gender-balanced set of images (e.g., “caring,” “communicative”), but only those with positive connotations, which are arguably traits that, while “warm,” actually help us achieve our goals. On the other hand, warm traits with negative connotations (e.g., “shy,” “insecure”) were always more likely to retrieve images of women. In addition, while the four regional servers of Bing do not serve up the same images in response to a given query (average overlap between any two regions was around half), the patterns of gender distributions in the images retrieved for warm versus agentic traits were quite consistent. In other words, gender biases in image search results were not restricted to a particular regional market.
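One way to quantify the overlap between regional result sets is sketched below. The choice of Jaccard similarity (shared images divided by all distinct images) is an assumption made for this example, as are the function names and the toy image identifiers; other overlap measures would work equally well.

```python
from itertools import combinations


def jaccard(a, b):
    """Jaccard similarity of two collections of image identifiers."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def mean_pairwise_overlap(results_by_region):
    """Average Jaccard similarity over every pair of regions."""
    pairs = list(combinations(results_by_region.values(), 2))
    if not pairs:
        raise ValueError("need at least two regions")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Toy identifiers invented for illustration only.
toy_results = {
    "region_1": ["img1", "img2", "img3"],
    "region_2": ["img2", "img3", "img4"],
    "region_3": ["img1", "img2", "img3"],
}
overlap = mean_pairwise_overlap(toy_results)
print(f"mean pairwise overlap = {overlap:.2f}")
```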

Having documented the gender biases inherent in image search algorithms, what can we do? We hope that our work inspires search developers to think critically about each stage of the engineering process and about how and why biases could enter it. Developers who build on search APIs like Bing’s, which we used to conduct our research, need to be aware that any existing biases will be carried downstream into their own creations. We also believe that it is possible to develop automated bias detection methods, such as using image recognition techniques to check who is, and who is not, represented in a set of results. Search engine companies like Google and Microsoft should prioritize this work, as should journalists, researchers, and activists.

Finally, users can benefit from being more “bias-aware” in their interactions with search algorithms. We are currently experimenting with ways to trigger user awareness during interactions with search services. Users need not just to be made aware of potential problems with algorithmic results, but also to be given some idea of how these algorithms work.

This is easier said than done. Decades of research have shown that users interacting with a computer system tend to approach it as they would any human actor. This means people apply social expectations to a system like Google. Google image search, of course, does not have a human in the loop who chooses relevant images in response to a query and who would recognize the need to present results that are not blatantly stereotypical. A modern search engine’s decisions are driven by a complex set of algorithms that have been developed via machine learning. The user knows this; however, when she observes unexpected or offensive results, social expectations drive her to question whether the underlying algorithms might be “racist” or “sexist.”

But our instinct to endow these systems with agency, to assume they are like us, may hinder our ability to change them. Like us, algorithms can be biased. The reasons can be complicated — bad or biased training data, reliance on proxies, biased user feedback — and the ways to address them may look little like the ways we address the biases in ourselves.
