April 07, 2022

Google is releasing a new feature for its search engine that tries to mimic how we inquire about things in the real world.

Instead of just typing into a search box, you can now present an image with Google Lens, then tailor the results with follow-up questions. You might, for instance, submit a picture of a dress, then ask to see the same style in different colors or skirt lengths. Or, if you spot a pattern you like on a shirt, you can ask to see that same pattern on other items, such as drapes or ties. The feature, called “Multisearch,” is now rolling out to Google’s iOS and Android apps, though it doesn’t yet incorporate the “MUM” algorithm that Google demonstrated last fall as a way to transform search results.

Google Director of Search Lou Wang says that Multisearch takes after the way we ask questions about things we’re looking at, and is an important part of how Google views the future of search. It may also help Google maintain an edge against a wave of more privacy-centric search engines, all of which remain focused on text-based queries. (It’s also reminiscent of a four-year-old Pinterest feature that lets users search for clothing based on photos of their wardrobe.)

“A lot of folks think search was kind of done, and all the cool, innovative stuff was done in the early days,” Wang says. “More and more, we’re coming to the realization that that couldn’t be further from the truth.”

Adding text to image searches

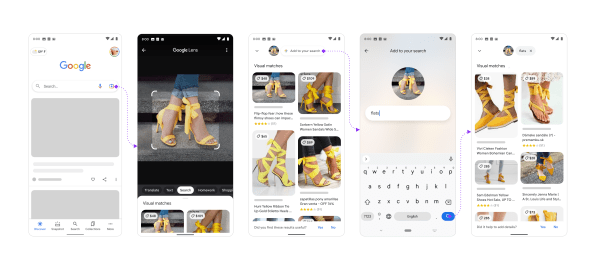

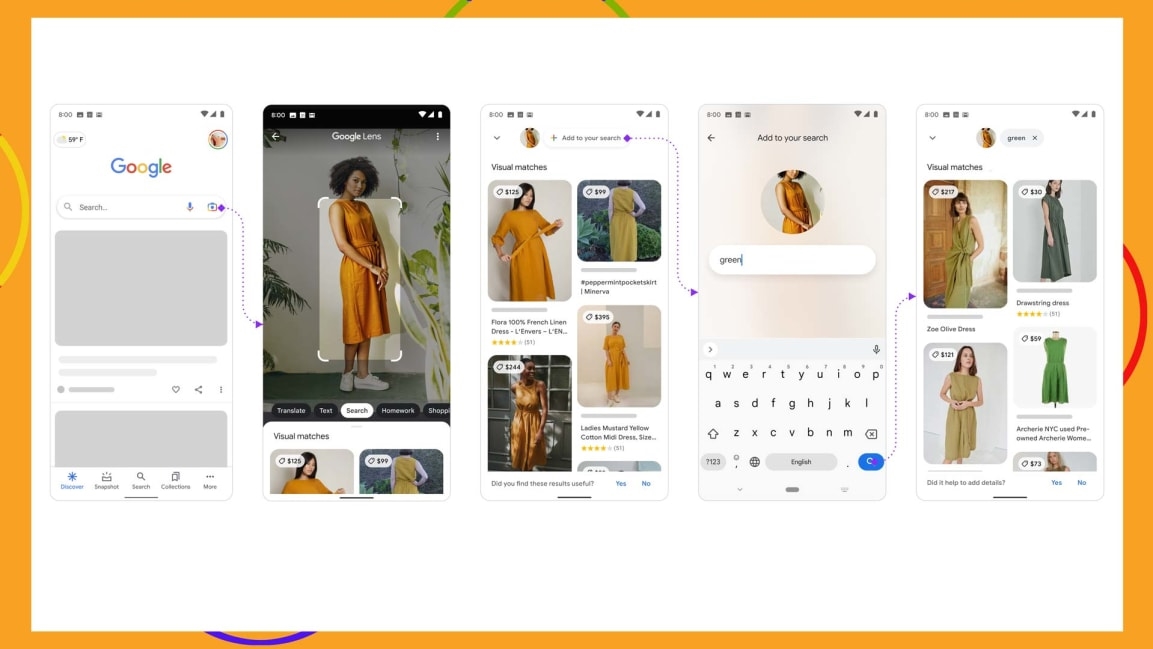

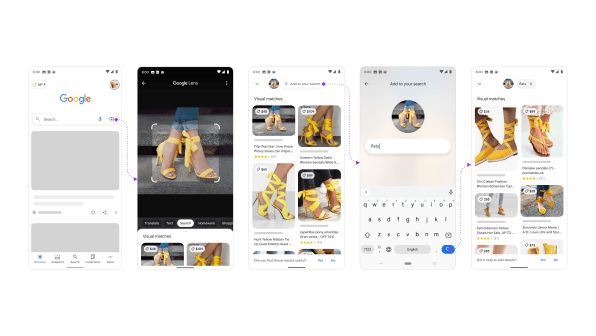

To use the new Multisearch feature, you must open the Google app on your phone, then tap the camera icon on the right side of the search bar to bring up Google Lens. From here, you can use your camera’s viewfinder to identify an object in the physical world or select an existing image from your camera roll.

Once you’ve identified an object, swipe up to show visual matches, then tap the “Add to your search” button at the top of the screen. This brings up a text box to narrow down the results.

While Google Lens has been available since 2017, the ability to filter your search with text is new. It involves not just matching an image with similar ones, but understanding the properties of those images so that users can ask more questions about what they’re seeing. As you might expect, enabling that involves a lot of computer vision, natural language processing, and machine learning techniques.

“People want to show you a picture, and then they want to tell you something, or they want to ask a follow-up based off of that. This is what Multisearch enables,” Wang says.

For now, Google says the feature works best with shopping-related queries, which is already what many people use Google Lens for. For instance, the company demonstrated a visual search for a pair of yellow high heels with a ribbon around the ankle, then added the word “flat” to find a similar design without heels. Another example would be taking a picture of a dining table and searching for coffee tables that match.

But Belinda Zeng, a Google Search product manager, says Multisearch could also be useful in other areas. She gives the example of finding a nail pattern on Instagram and searching for tutorials, or photographing a plant whose name you don’t remember and looking up care instructions.

“We definitely think of this as a powerful way to search beyond just shopping,” she says.

Beyond keyword search

Making Multisearch a natural part of people’s search regimen will have its challenges.

For one thing, Google Lens isn’t available in web browsers, though Zeng says Google is looking into browser support. Even in the Google mobile app, Lens is easy to miss, and having to swipe up on image results and tap another button isn’t the most intuitive process.

Wang says Google has “lots of different ideas and explorations” for how it might make Lens more prominent, though he didn’t get into specifics. He did note, however, that Google now fields more than 1 billion image queries per month.

“Even now, people are just waking up to the fact that Google can search through pictures or your camera,” he says.

The bigger challenge will be answering those visual queries competently enough that Multisearch doesn’t just feel like a gimmick. One use case Google is interested in is helping people make repairs around the house, but identifying the myriad appliances and components involved, then finding relevant instructions for fixing them, is still a hard problem to solve.

Ultimately, though, Google is hoping to change users’ perception of search so it’s not strictly text-based. Doing so would give the company more of an advantage against alternative, privacy-centric search engines such as DuckDuckGo, Brave Search, Neeva, Startpage, and You.com.

Wang says Google isn’t thinking about Multisearch in terms of competition with other search engines. Still, the stale state of text-based searches may help explain why newer upstarts think they have a chance against Google in the first place. For Google, leaning into computer vision and machine learning to fundamentally change the nature of search may help, whether it’s worried about alternative search engines or not.

“Over time, as Google becomes better and better at understanding images and combining these things to qualify search,” Wang says, “it’s going to be more naturally a part of how people think about search.”
