We live in a world where quality matters. Every day, we subconsciously evaluate every transaction that we make, and it is not hyperbole to state that the quality of both the action and the product that it relates to can have a significant impact on our lives. And while the average person might not consider all the ramifications of poor quality, the plain truth is that this can be a barometer for brand reputation and consumer trust.

In a physical sense, we can always see how quality plays an important role in business optimization strategies. Search for “product recall” in any chosen manufacturing sector and you will get hundreds of thousands of results in seconds. On a personal level, a badly made cup of coffee or poor customer experience in a retail location may make us think twice about a second visit.

But how do we apply the concept of quality in the digital realm? Importantly, how can we ensure that the software responsible for delivering the digital experiences that we take for granted in the connected society is up to the task?

According to a report published by the Consortium for Information and Software Quality (CISQ), poor-quality software cost U.S. companies over $2 trillion in 2020. The report, cited by CIO Dive, said that these costs could be traced back to “unsuccessful IT and software projects, poor quality in legacy systems and operational software failures,” while an undetected software flaw can be the cause of critical system failures and outages.

If you want actual proof of that pain point, the global outage on June 8 that took out some very high-profile digital logos was caused by an undiscovered software bug that was triggered by ONE customer of edge platform provider Fastly pushing a valid configuration change that caused 85% of the network to return errors. Granted, 95% of the network was “working normally” within 49 minutes, but the apologetic blog post that explained why this happened tells its own story.

So, software quality not only matters, but can also play a critical role in ensuring that vulnerabilities don’t see your company logo splashed all over the morning news headlines. With that in mind, let’s take a deeper dive into why integrating software quality metrics into your development process is a prudent path to follow.

Why Measure Problems and Defects?

Software metrics give stakeholders a quantitative basis for planning and forecasting the software development process. In other words, the quality of the software can be easily monitored and improved. In fact, there is a consensus that attention to quality helps to increase productivity and fosters a culture of continuous improvement.

Measuring software and its associated processes starts with collecting data, which helps us better control the progress, cost, and quality of software products. It is worth remembering that some attributes can be measured directly, such as baseline dimensions (which can be counted and measured consistently), while others, like defects, effort, and time, are tracked against a schedule.

In this way, continuous measurement supports the following activities:

- Quantitatively expressing requirements, goals, and acceptance criteria.

- Monitoring progress and anticipating problems.

- Quantifying trade-offs used in allocating resources.

- Predicting the software attributes for schedule, cost, and quality.

To put it more simply, by measuring problems and defects, we obtain data that can be used to control software products. This data can be split into five defined metrics: Reliability, Usability, Security, Cost and Schedule, and Efficiency.

These metrics can be further explored in the sections below:

Reliability

The reliability of software is the probability that it will run error-free for a specified period of time in a specified environment.

Unsurprisingly, software reliability is also an important factor in system reliability. It differs from hardware reliability because it reflects the quality of the design rather than the quality of manufacturing. The caveat is that the high complexity of software is the main factor that causes software reliability problems.
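As a rough illustration of that definition, the sketch below estimates the probability of failure-free operation over a given period. It assumes a constant failure rate (the simple exponential model), which is only one of many possible models, and the failure counts are hypothetical figures chosen for the example.

```python
import math


def reliability(failure_rate_per_hour: float, hours: float) -> float:
    """Probability of running failure-free for `hours`.

    Assumes a constant failure rate (exponential model): R(t) = exp(-rate * t).
    """
    if failure_rate_per_hour < 0 or hours < 0:
        raise ValueError("failure rate and duration must be non-negative")
    return math.exp(-failure_rate_per_hour * hours)


# Hypothetical observation: 2 failures over 1,000 hours of operation.
observed_failures = 2
observed_hours = 1000.0
rate = observed_failures / observed_hours

print(f"R(24 hours)  = {reliability(rate, 24):.3f}")
print(f"R(168 hours) = {reliability(rate, 168):.3f}")
```

The longer the period, the lower the estimated probability of an error-free run, which is why a reliability figure only means something when the time window and environment are stated alongside it.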

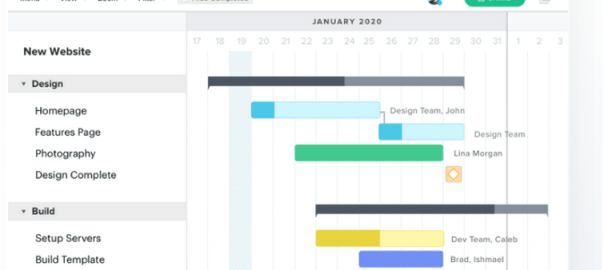

Figure 1. Reliability Growth Model for Quality Management. Source: Reliability Growth Models

Although researchers have created models (such as the one shown above) that relate reliability to time, software reliability is not strictly a function of time. In practice, software reliability modeling is a priority for many companies but has not yet gained widespread adoption.

In terms of available technology, we need to carefully choose the model that best suits our situation. In many ways, software measurement is still in its infancy.

There is no good quantitative method to prove the reliability of software without undue restrictions. Various methods can be used to improve reliability, but it is difficult to strike a balance between development time or budget and the reliability of the software itself. CISQ’s report, for instance, said that a lot of IT projects developed during the pandemic contributed to an increase in software failures because they were reactive rather than well thought out.

Usability

The usability level focuses on how users learn and use the product to achieve their goals. This level also applies to the end user’s satisfaction with the process.

To gather this information, software professionals use a variety of methods to collect user feedback on either an existing website or plans for a new one.

A usability evaluation can include two types of data: qualitative and quantitative. The former describes the thoughts and opinions of participants in the evaluation, while the latter shows what actually happened.

Once collected, this data can be used to:

- Evaluate the usability of the website.

- Recommend improvements.

- Implement recommendations.

- Retest your website to measure the effectiveness of the changes.
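To make the quantitative side of that loop concrete, here is a minimal sketch that compares two rounds of usability testing. The session data, the choice of task completion rate and time on task as measures, and the `summarize` helper are all assumptions made for illustration.

```python
from statistics import mean

# Hypothetical test sessions: (task completed?, seconds spent on the task)
before = [(True, 95), (False, 180), (True, 120), (False, 200), (True, 110)]
after = [(True, 70), (True, 85), (True, 90), (False, 150), (True, 75)]


def summarize(sessions):
    """Return (completion rate, mean time on task for completed sessions)."""
    completed_times = [seconds for done, seconds in sessions if done]
    rate = len(completed_times) / len(sessions)
    avg_time = mean(completed_times) if completed_times else float("nan")
    return rate, avg_time


before_rate, before_time = summarize(before)
after_rate, after_time = summarize(after)

print(f"Completion rate: {before_rate:.0%} before, {after_rate:.0%} after")
print(f"Mean time on task: {before_time:.1f}s before, {after_time:.1f}s after")
```

Numbers like these show what happened; the qualitative feedback from the same sessions is still needed to explain why.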

Security

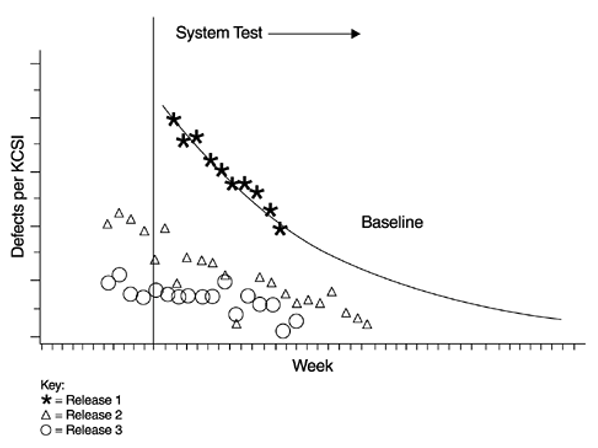

Software security is the idea of developing software that can still function normally in the face of malicious attacks. The average cost of a data breach, according to a cited 2020 report from IBM, is around $ 3.9 million, so security is a key factor.

Software security aims to avoid security vulnerabilities by providing security early in the software development life cycle. Essentially, security is risk management.

Figure 2: Three Pillars of Software Security Source : Software Security

And while there is an assumption that all software developers would have this requirement at the top of their to-do list, Security metrics can help you track:

- Percentage of infiltrated applications

- Percentage of bugs fixed

- Cost of repairs due to software security flaws

- Cost of application security resources

- Number of applications tested

- Number of applications that meet or exceed compliance requirements

- The number of code defects that have reached the production environment

- The number of critical applications that require in-depth testing

- Time to build secure applications

- Time to test each application
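A few of the metrics in this list can be derived from very simple per-application records. The sketch below is one possible way to do that; the `AppSecurityRecord` fields, the application names, and the figures are hypothetical and would come from your own tracking tools in practice.

```python
from dataclasses import dataclass


@dataclass
class AppSecurityRecord:
    # Hypothetical record layout for one application.
    name: str
    tested: bool
    compliant: bool
    bugs_found: int
    bugs_fixed: int
    defects_in_production: int


def percent(part: int, whole: int) -> float:
    """Percentage of `part` in `whole`, or 0.0 when there is nothing to count."""
    return 100.0 * part / whole if whole else 0.0


def summarize(records):
    """Aggregate a handful of security metrics across a portfolio."""
    total_found = sum(r.bugs_found for r in records)
    total_fixed = sum(r.bugs_fixed for r in records)
    return {
        "applications_tested": sum(r.tested for r in records),
        "applications_compliant": sum(r.compliant for r in records),
        "percent_bugs_fixed": percent(total_fixed, total_found),
        "defects_in_production": sum(r.defects_in_production for r in records),
    }


portfolio = [
    AppSecurityRecord("billing", True, True, 12, 9, 1),
    AppSecurityRecord("portal", True, False, 8, 6, 2),
    AppSecurityRecord("reports", False, False, 0, 0, 0),
]

report = summarize(portfolio)
for metric, value in report.items():
    print(f"{metric}: {value}")
```

Tracking the same summary release after release is what turns these counts into a trend you can act on.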

Once you have a handle on the security side of the software in development, then you can factor in metrics that relate to time-to-market, the cost of unforeseen vulnerabilities and a plethora of other variables that can impact launch.

Cost and Schedule

Rework is an important cost factor in software development and maintenance. As we all know, the number of product-related issues and defects directly affects these costs.

Measuring problems and defects, however, helps us understand not only where and how they occur but also how to identify, predict, and prevent the costs they cause. In turn, this means we can keep these costs under control.

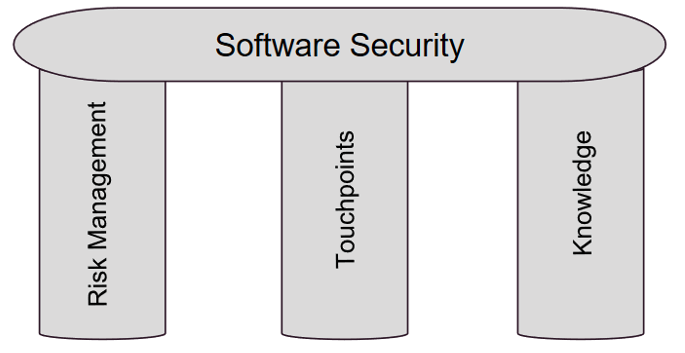

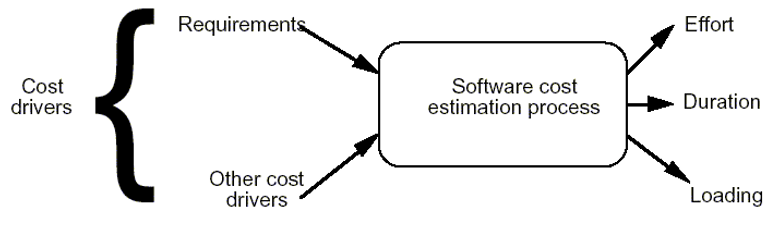

Figure 3: Software Cost Estimation Process Drivers & Outputs Source: Software Cost Estimation

Cost estimation is important for determining and justifying the funding required, judging whether specific recommendations are appropriate, and ensuring that the software development plan is consistent with the overall system plan. It also plays a part in working out how many staff to assign to a project and in building a realistic baseline and timetable for effective and meaningful plan management.

Although workload, people, and processes are the main drivers of advancement, we can use problem and error metrics to track project progress, identify process inefficiencies and predict obstacles that might endanger deadlines.

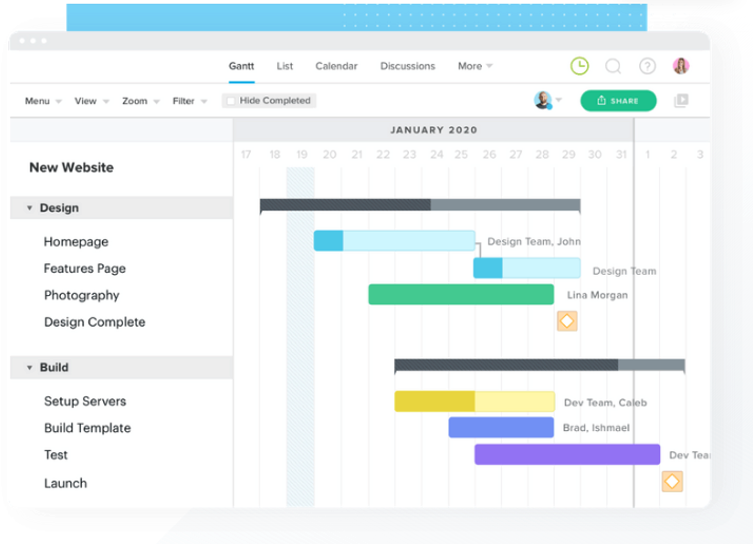

The visual below gives us an idea of how we can set these metrics up:

Figure 4: Software Scheduling Software Source: TeamGantt

Schedule measurement tracks the contractor’s performance against commitments, deadlines, and milestones. Milestone KPIs provide you with graphical representations (graphs and charts) of program activities and planned schedules.

Please note that when signing a contract for software development, entry and exit criteria must be agreed for each event or activity. One of the difficulties of explaining schedule indicators to a customer is that many activities happen at the same time. With that in mind, look for problems in the process and do not sacrifice quality for the sake of the schedule.
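As a simple sketch of tracking performance against milestones, the example below computes how many days each completed milestone slipped and what share landed on time. The milestone names and dates are hypothetical, and `slip_days` is a helper introduced only for this illustration.

```python
from datetime import date

# Hypothetical milestones: (name, planned date, actual date or None if still open)
milestones = [
    ("Requirements sign-off", date(2021, 3, 1), date(2021, 3, 1)),
    ("Design review", date(2021, 4, 15), date(2021, 4, 22)),
    ("Beta release", date(2021, 6, 1), date(2021, 6, 15)),
    ("General availability", date(2021, 8, 1), None),
]


def slip_days(planned, actual):
    """Days late (positive) or early (negative); None while the milestone is open."""
    return None if actual is None else (actual - planned).days


# Only completed milestones have a measurable slip.
completed = [(name, slip_days(p, a)) for name, p, a in milestones if a is not None]
on_time = sum(1 for _, slip in completed if slip <= 0)
on_time_rate = on_time / len(completed)

for name, slip in completed:
    print(f"{name}: {slip:+d} days")
print(f"On-time milestones: {on_time} of {len(completed)}")
```

A growing slip from one milestone to the next, as in this made-up data, is the kind of early signal that a chart of planned versus actual dates makes easy to spot.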

Efficiency

This metric relates to the amount of software developed, or the demand met, divided by the resources used (such as time and effort).

And you don’t need to be a rocket scientist to know that different teams and engineers have different performance indicators. That makes efficiency even more vital, so it is probably a good idea to make these align. An efficient outlook would see the teams have the same task at the same time, with each task checked several times by the teams to ensure that quality is being maintained throughout.

We can demonstrate how efficiency metrics can be of use in software development by considering the following as suitable:

- Meeting Times: team leaders or scrum masters should inspect the committed meeting time and the actual meeting time.

- Lead Time: the amount of time between the start and the end of a process. This depends on both team quality and project complexity, both of which directly affect the project cost.

- Code Churn: how much of their own recently written code developers end up editing, adding to, or deleting.

- MTTR and MTBF: these stand for Mean Time to Repair and Mean Time Between Failures.

- Impact: a measure of how much of the codebase is affected by a given change.
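Two of the metrics above, MTTR and MTBF, are straightforward to compute once incidents are logged with timestamps. The following is a minimal sketch under that assumption; the incident log is made up, and MTBF is taken here as the average uptime between one restoration and the next failure, which is one common convention among several.

```python
from datetime import datetime, timedelta

# Hypothetical incident log: (failure detected, service restored)
incidents = [
    (datetime(2021, 6, 1, 9, 0), datetime(2021, 6, 1, 10, 0)),
    (datetime(2021, 6, 11, 14, 0), datetime(2021, 6, 11, 14, 30)),
    (datetime(2021, 6, 21, 8, 0), datetime(2021, 6, 21, 9, 30)),
]


def mean_timedelta(deltas):
    """Arithmetic mean of a non-empty list of timedeltas."""
    return sum(deltas, timedelta()) / len(deltas)


def mttr(log):
    """Mean time to repair: average of (restored - detected)."""
    return mean_timedelta([restored - detected for detected, restored in log])


def mtbf(log):
    """Mean time between failures: average uptime between consecutive incidents."""
    if len(log) < 2:
        raise ValueError("need at least two incidents to measure time between failures")
    ordered = sorted(log)
    gaps = [nxt[0] - prev[1] for prev, nxt in zip(ordered, ordered[1:])]
    return mean_timedelta(gaps)


print("MTTR:", mttr(incidents))
print("MTBF:", mtbf(incidents))
```

Lead time can be measured the same way, as the difference between the timestamp at which work on an item started and the timestamp at which it was delivered.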

Metrics Can Be Invaluable

As we have noted above, the quality of any software development is a key part of determining the success or failure of that software. Yes, nobody wants their software to fail, and it is a safe bet that said failure is going to be looked at in an unfavorable light by the end users of that code.

Ultimately, the nature of software development is complex, so it makes sense that we need complex metrics to help us understand the effects of our changes and the inner workings of systems, especially as they get larger and more complex. Once we have the concept of quality baked into everything we do, then it follows that we will be able to deliver what the customer wants. Metrics may not be the sexiest part of the process, but they are a great way of making sure that everything goes to plan.
