When Google introduced RankBrain into its ranking algorithm, many wondered how that might impact SEO. Columnist Larry Kim theorizes that it has placed greater importance on user signals.

The way Google ranks pages in its search results today is much different from the way it ranked them two years ago.

Why? In early 2015, Google began its slow rollout of RankBrain, a machine-learning artificial intelligence system that helps process search results as part of Google’s ranking algorithm. As of June 2016, RankBrain is being used for all Google queries.

But how does machine learning impact rankings exactly? That’s the big question.

SEO used to be all about building links and using the right keywords. Links and keywords still matter, but machine learning has transformed the traditional SEO ranking model into something new.

Let me explain.

The new SEO ranking model

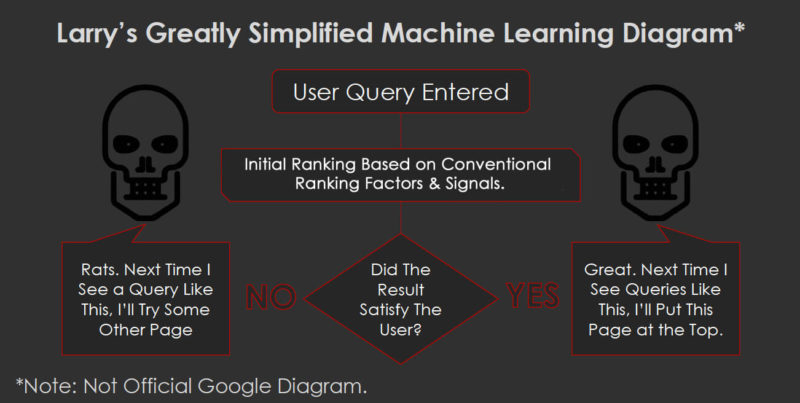

What is this new model? No one knows for sure, but here’s my theory:

A searcher enters their query. Google returns a set of relevant organic search results that are largely based on conventional ranking factors. Machine learning then becomes a “layer” on top of this. It becomes the final arbiter of rank — quality control, if you will.
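To make the two-stage idea concrete, here is a minimal sketch of the theory described above. It is not Google's algorithm. The `Page` fields, the 0-to-1 scores and the `shortlist_size` parameter are all hypothetical, chosen only to show a conventional ranking pass followed by an engagement-based reordering.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    conventional_score: float  # hypothetical 0-1 score: links, keywords, domain strength
    engagement: float          # hypothetical 0-1 score: click-through rate and dwell time


def rank(pages, shortlist_size=10):
    # Stage 1: shortlist the pages that score best on conventional factors.
    shortlist = sorted(
        pages, key=lambda p: p.conventional_score, reverse=True
    )[:shortlist_size]
    # Stage 2: the engagement "layer" decides the final order within the shortlist.
    return sorted(shortlist, key=lambda p: p.engagement, reverse=True)


# Made-up pages for illustration only.
pages = [
    Page("/strong-links-weak-clicks", 0.95, 0.20),
    Page("/solid-all-round", 0.80, 0.70),
    Page("/great-content-no-links", 0.10, 0.95),
]

ranked = rank(pages, shortlist_size=2)
print([p.url for p in ranked])
```

In this toy example, the page with the best links makes the shortlist but ends up below the page with better engagement, while the page with great engagement and weak conventional signals never makes the shortlist at all.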

It’s like Google is saying, “Great, I’ve successfully crawled and indexed this page. The page exists on a strong domain (it has a high level of expertise, authoritativeness and trust). The content is optimized, understandable, relevant and matches the searcher intent. BUT do any humans click on the result and engage with it?”

This last sentence is the key.

“Perfect SEO” is pretty imperfect if you’ve created content that ranks on search engines but doesn’t get any clicks.

It doesn’t matter how many links you have pointing at your page or if it’s optimized with all the right keywords — if the engagement is too low, then you’re out.

Of course, you won’t be out immediately. Google will continue auditioning your page for relevant queries… for a time. But if it fails to attract engagement, it will die a slow death. It could lose 3 percent of traffic per month, a drop so small you won’t notice it until it’s too late. Eventually, your page will simply fall out of ranking contention.
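A quick back-of-the-envelope calculation shows why a small monthly loss is easy to miss and costly to ignore. The sketch below assumes the 3 percent figure compounds at a constant rate every month, which is a simplification. The function name `remaining_traffic` is just an illustrative helper.

```python
def remaining_traffic(months, monthly_loss=0.03):
    """Fraction of the original traffic left after compounding monthly losses."""
    return (1 - monthly_loss) ** months


for months in (6, 12, 24):
    lost = 1 - remaining_traffic(months)
    print(f"{months} months: {lost:.1%} of traffic lost")
```

Under that assumption, a page loses roughly 31 percent of its traffic after one year and a little over half after two.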

Dwell time will be the death of your low-quality pages

The relationship between time on page and organic search traffic is changing. Something algorithmic is happening.

Can we see it? Yes! Let’s walk through some data.

In “Does Dwell Time Really Matter for SEO? [Data],” I explained how to find your most vulnerable content: pages that are likely to lose organic traffic because they have low engagement. We did this by looking at time on page because it is roughly proportional to dwell time, a metric only Google can measure.
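One way to turn that idea into a report is sketched below. It is an assumption-laden illustration, not the method from the earlier article: the page data is made up, and the rule of flagging pages whose time on page falls below half the site median is a hypothetical threshold you would tune for your own site.

```python
from statistics import median

# Hypothetical analytics export: (url, monthly organic sessions, avg. time on page in seconds)
pages = [
    ("/blog/a", 12000, 35),
    ("/blog/b", 8000, 210),
    ("/blog/c", 500, 20),
    ("/blog/d", 3000, 180),
    ("/blog/e", 9000, 240),
]


def vulnerable_pages(pages, ratio=0.5):
    """Flag pages whose time on page is below `ratio` times the site median."""
    site_median = median(time_on_page for _, _, time_on_page in pages)
    flagged = [p for p in pages if p[2] < ratio * site_median]
    # Pages with the most organic traffic have the most to lose, so list them first.
    return sorted(flagged, key=lambda p: p[1], reverse=True)


for url, sessions, time_on_page in vulnerable_pages(pages):
    print(f"{url}: {sessions} sessions, {time_on_page}s on page")
```

Sorting the flagged pages by organic sessions puts the content with the most traffic at risk at the top of the list.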

To quickly review, before RankBrain rolled out, you may have had pages that ranked well but really didn’t deserve to. Even though they had low engagement, it didn’t hurt your organic traffic in a noticeable way.

Here’s an example. Look at the average time on page for some of these high-ranking pages:

But after RankBrain, that all changed. Now look at the top pages. They all have excellent engagement metrics:

A lot of people think the relationship between dwell time and SEO is tenuous. However, this data suggests there is a real correlation between the two.

Now let’s go further.

Breaking down a donkey

This is one of the “donkey” pages — i.e., pages that don’t really deserve to rank well — that got killed by RankBrain:

Wow, this is crazy! Look what has happened.

Organic traffic has declined by 65.5 percent in the past 16 months! Holy moley.

This is how machine learning works. It doesn’t take all your traffic at once. It takes it a small percentage at a time! That makes it hard to track unless you know what you’re looking for.
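To see what "a small percentage at a time" means for this page, the snippet below converts the 65.5 percent decline over 16 months into an equivalent steady monthly rate. It assumes the decline was constant from month to month, which the data may not support exactly. The variable names are illustrative.

```python
total_decline = 0.655  # 65.5 percent drop in organic traffic
months = 16

# Solve (monthly_retention ** months) == 1 - total_decline for monthly_retention.
monthly_retention = (1 - total_decline) ** (1 / months)
monthly_decline = 1 - monthly_retention

print(f"Equivalent steady monthly decline: {monthly_decline:.1%}")
```

That works out to a little over 6 percent per month: small enough to blend into normal month-to-month noise, yet enough to remove about two-thirds of the traffic in 16 months.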

This page used to rank for probably 1,000 different queries. Google has likely been auditioning it for lots of different long-tail variations, and it hasn’t been performing well in terms of engagement.

What I think is happening is that this page is getting knocked out of contention for ranking on different types of queries every month, bit by bit.

Could other factors be at play? Of course — Google’s algorithm is a complex beast, plus new content is being created all the time (by you and your competitors). But traffic is consistently dying just a little every month.

Simplifying the new SEO ranking model with a sports analogy

The National Football League has 17 weeks of regular season games to separate the unicorns (winners) from the donkeys (losers). That is followed by a series of playoff games, culminating with the Super Bowl — the winner of which is declared the top team.

This year, 12 teams made it to the NFL playoffs. The New England Patriots and the Atlanta Falcons both dominated this season, but only one team (the Patriots) won the Super Bowl.

In Google’s ranking model, Google uses hundreds of conventional SEO ranking factors and signals to determine which pages are most relevant for different queries. After this initial ranking, Google does its own version of playoffs, picking winners based on user engagement.

Out of 10 organic results, only one can win the top position in Google’s SERPs.

Conventional SEO ranking factors (e.g., relevant content, keyword alignment, links to your domain, domain strength) determine how the SEO “regular season” plays out.

If your traditional SEO isn’t good enough, you won’t make the playoffs. Your stuff will rank on Page 5 or 10 (or worse!) of Google — not that it really matters because few people bother to visit those pages.

Pages that score the most SEO touchdowns will perform well. Only a few pages will get into the “postseason.”

In the NFL playoffs, defense wins championships. But in the SEO playoffs, it’s all about the user engagement metrics: click-through rate and dwell time.

This is where machine learning really separates the unicorns from the donkeys. All your efforts are wasted if you lose.

Conclusion

Clearly, you want to have as many pages as possible with excellent, unicorn-level engagement metrics. These will be your most valuable pages, and they should pass Google’s machine-learning test.

You also want to find your most at-risk content because if you don’t meet the minimum engagement, you’ll be out.

Again, this is just my theory at this point, based on some pretty compelling examples — but nothing involving Google’s algorithms can be 100 percent conclusive.

Try it out yourself. Run the reports, and let me know what you’re seeing!

[Article on Search Engine Land.]

