— November 25, 2017

If you ask most marketers, they will tell you that A/B testing and personalization are two completely different things. I respectfully disagree, and I think this disagreement gets at the root of how best to use them together.

We as growth/performance marketers are accountable for results, often measured with conversions, new customers, or revenue. Every marketer wants higher conversion rates, and A/B testing and personalization are simply two ways to drive more conversions.

Still, most marketers treat testing and personalization as independent efforts when, in fact, they are best applied together. When you understand this, you can get so much more out of your conversion rate optimization efforts.

Why and when you should use A/B testing and personalization

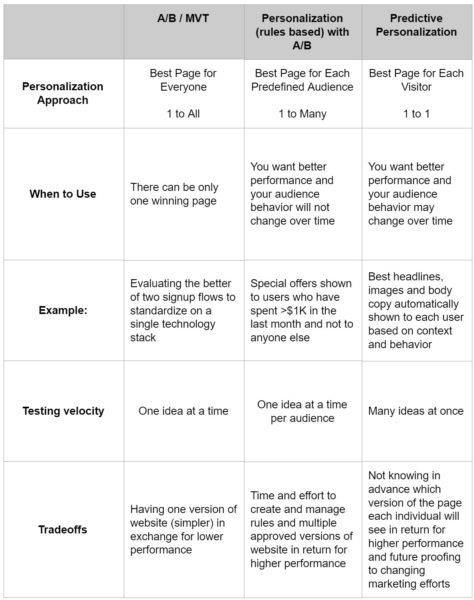

A/B testing and multivariate testing (MVT) are commonly used to find the single best performing page (or a set of pages) to show all users. This approach makes sense when you can show only one version of your site to visitors.

For example, these testing techniques are useful if you want to decide between two sign-up flows, each built on a separate technology stack, and then standardize on the winning flow and its stack going forward.

Personalization is about tailoring the experience

A/B testing is effective at testing one idea versus another and finding which is better on average.

Personalization allows you to serve different versions of your site tailored to the different contexts and interests of your audience. Having more than one version of your site allows you to “de-average” your message and deliver better-performing variations to each visitor. Despite this, Clearhead found last year that only 17% of online retailers have a path to develop personalized experiences for customers, and similar stories can be found in other industry verticals.

Many marketers are already personalizing their site without fully realizing it. For example, if you have separate landing pages for different audiences or campaigns, you are personalizing your site.

When correctly applied, personalization will always deliver better results than a single page shown to all users.

Why? Delivering the best performing experience for each audience segment delivers better results than serving the best average performer of all. This table illustrates how this works:
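The same reasoning can be worked through numerically. The sketch below assumes two hypothetical segments (mobile and desktop) and two page variants with invented conversion rates; none of these numbers come from a real test. It compares showing everyone the single best average variant against showing each segment its own best variant.

```python
# Hypothetical visitors per segment and conversion rates per variant.
# These numbers are invented for illustration only.
traffic = {"mobile": 6000, "desktop": 4000}
rates = {
    "A": {"mobile": 0.020, "desktop": 0.050},
    "B": {"mobile": 0.035, "desktop": 0.030},
}


def total_conversions(assignment):
    """Expected conversions when segment s is shown variant assignment[s]."""
    return sum(traffic[s] * rates[assignment[s]][s] for s in traffic)


# A/B testing alone: every segment sees the single best average variant.
best_single = max(rates, key=lambda v: total_conversions({s: v for s in traffic}))
single_total = total_conversions({s: best_single for s in traffic})

# Personalization: each segment sees the variant that is best for it.
per_segment = {s: max(rates, key=lambda v: rates[v][s]) for s in traffic}
personalized_total = total_conversions(per_segment)

print(best_single, round(single_total))          # B 330
print(per_segment, round(personalized_total))    # {'mobile': 'B', 'desktop': 'A'} 410
```

Variant B wins on average, yet desktop visitors convert better on A. Because the per-segment choice is the best option for every segment individually, it can never do worse than the single winner when the rates are known; in practice the rates have to be estimated, which is where testing comes in.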

But, you may ask, “How do I know which variation of my site to serve to which of my audience segments?” The answer is to do both personalization and A/B testing together.

You can get the best of both by showing each predefined segment its own experience while using testing to confirm that the experience you’re showing is actually the best one for that segment.

How to Combine A/B Testing and Personalization for Better Results

Rather than being completely separate, A/B testing and personalization go together like chocolate and peanut butter. You should have a testing mentality when you do personalization, which is a mind-shift from where I see most marketers today.

You can test potential personalizations using an A/B testing tool by setting up an audience for each segment and then A/B testing within that audience.

To do this, first predefine each target segment based on data you have about them. Then create an audience that matches this definition. Then create an A/B test for each segment.

For each A/B test, assign the corresponding audience that ensures it’s only shown to that segment. You will then find the best page (A or B) to show this segment. Most popular A/B testing tools have this ability.
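Once each segment’s test has collected data, you still need to decide which page wins for that segment. A minimal sketch, assuming a two-sided two-proportion z-test at a 5% significance level (one common choice; real testing tools may use different statistics) and hypothetical conversion counts for two hypothetical audiences:

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided two-proportion z-test; returns (z, p_value)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


def segment_winner(result, alpha=0.05):
    """Return "A" or "B" if the difference is significant at alpha, else None."""
    (conv_a, n_a), (conv_b, n_b) = result["A"], result["B"]
    z, p_value = two_proportion_z_test(conv_a, n_a, conv_b, n_b)
    if p_value >= alpha:
        return None  # keep testing; no clear winner for this segment yet
    return "B" if z > 0 else "A"


# Hypothetical (conversions, visitors) for one A/B test per audience.
results = {
    "returning_customers": {"A": (180, 4000), "B": (240, 4000)},
    "new_visitors": {"A": (150, 5000), "B": (140, 5000)},
}

for segment, result in results.items():
    print(segment, segment_winner(result))
# returning_customers B
# new_visitors None
```

Each audience gets its own verdict: here B clearly wins for returning customers, while the new-visitor test needs more data before either page can be declared the winner.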

Rule-based personalization

You can also set this up within a rules-based personalization tool by running an A/B test within a segment. To do this, you set up a series of rules to define targeting for a specific segment and/or context. Then activate an A/B test within each rule. This test will only be shown to the visitors who are eligible to see this rule.

Most popular personalization tools offer A/B testing within a rule so that you can find the best page (A or B) to show each segment.
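The rule-plus-test setup can be sketched as a small routing function. This is a hypothetical illustration, not any particular tool’s API: rules are checked in order, the first matching rule wins, and each rule runs its own A/B test by hashing the visitor ID so a returning visitor keeps seeing the same variant. The rule names, visitor fields, and page names are all invented.

```python
import hashlib
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Rule:
    name: str
    matches: Callable[[dict], bool]  # targeting condition for a segment/context
    variants: Tuple[str, ...]        # pages A/B tested inside this rule


def bucket(visitor_id: str, test_name: str, n: int) -> int:
    """Deterministically map a visitor to one of n buckets for a given test."""
    digest = hashlib.sha256(f"{test_name}:{visitor_id}".encode()).hexdigest()
    return int(digest, 16) % n


def choose_page(visitor: dict, rules: list, default: str) -> str:
    """Evaluate rules in order; the first match runs its own A/B test."""
    for rule in rules:
        if rule.matches(visitor):
            index = bucket(visitor["id"], rule.name, len(rule.variants))
            return rule.variants[index]
    return default  # visitors who match no rule see the default page


# Hypothetical rules and page names.
rules = [
    Rule("paid_search_mobile",
         lambda v: v.get("source") == "paid_search" and v.get("device") == "mobile",
         ("mobile_landing_a", "mobile_landing_b")),
    Rule("returning_visitor",
         lambda v: v.get("returning", False),
         ("returning_home_a", "returning_home_b")),
]

visitor = {"id": "visitor-123", "source": "email", "device": "desktop", "returning": True}
print(choose_page(visitor, rules, default="home"))
```

Salting the hash with the rule name keeps the A/B split independent across rules, so being in bucket A for one rule says nothing about a visitor’s bucket in another.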

In both approaches, you will find the single best experience to show each segment, driving better performance than showing the best average performing experience to everyone. The practical tradeoff versus A/B testing is the time and effort required to create more versions of your site (and get them approved) in return for higher conversion rates.

Combining Testing and Personalization at Scale

The never-satisfied, always-wanting-to-do-better among us should then ask “is there a scalable way to do both together even faster?” The answer is yes, and the approach is called predictive personalization.

Effectively, all of the personalization we read about today is rule-based, meaning that every personalization requires us marketers to set up a rule saying, “For visitors that fit this profile and behave in this way, show them this experience.”

Predictive personalization adds automation to this approach.

Imagine a machine automatically discovering segments in real time, testing many ideas simultaneously, and showing each individual visitor the tailored experience most likely to cause them to convert at that moment.
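This article doesn’t say which algorithms predictive personalization tools use internally. One common family of techniques for showing better-performing variants more often while still exploring is the multi-armed bandit. The sketch below is a minimal Thompson sampling example, assuming segments are simple precomputed keys such as device type; it does not discover segments itself, and the class name and simulated conversion rates are hypothetical.

```python
import random
from collections import defaultdict


class SegmentedThompsonSampler:
    """Thompson sampling with an independent Beta(1, 1) prior per (segment, variant)."""

    def __init__(self, variants, seed=None):
        self.variants = list(variants)
        self.rng = random.Random(seed)
        # [alpha, beta] = [conversions + 1, non-conversions + 1]
        self.params = defaultdict(lambda: [1.0, 1.0])

    def choose(self, segment):
        # Sample a plausible conversion rate per variant and show the best draw,
        # so stronger variants are shown more often while others still get explored.
        draws = {v: self.rng.betavariate(*self.params[(segment, v)]) for v in self.variants}
        return max(draws, key=draws.get)

    def update(self, segment, variant, converted):
        counts = self.params[(segment, variant)]
        counts[0 if converted else 1] += 1


# Simulation with hypothetical true conversion rates that differ by segment.
true_rates = {("mobile", "A"): 0.02, ("mobile", "B"): 0.05,
              ("desktop", "A"): 0.06, ("desktop", "B"): 0.03}
sampler = SegmentedThompsonSampler(["A", "B"], seed=7)
world = random.Random(42)
shown = defaultdict(int)

for _ in range(20_000):
    segment = world.choice(["mobile", "desktop"])
    variant = sampler.choose(segment)
    shown[(segment, variant)] += 1
    sampler.update(segment, variant, world.random() < true_rates[(segment, variant)])

print(dict(shown))
```

Over the simulated traffic, mobile visitors end up seeing B most of the time and desktop visitors A, without anyone writing a rule for either segment.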

This approach has two meaningful implications: first, as you change your marketing efforts, the right experience to show each visitor may very well change over time. With A/B testing, we’re all vulnerable to this realistic risk, and without frequent re-testing, it would be difficult to even know it’s happening.

With predictive personalization, a machine keeps observing and adjusting to whatever experience is optimal at the time. We’re future-proofed against changes to our own marketing efforts and to our competitors’.

The follow-on implication is that tests don’t end. Tests yield results with statistical significance, along with insights we can act on across our marketing efforts. We leave the tests running in case the right answer changes. That is also a mind-shift from where I see most marketers today.
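Leaving a test running only helps if the system can let go of stale evidence. One common way to do that (an assumption here, not necessarily what any given tool does) is exponential discounting: before each new observation, past successes and failures are shrunk by a factor gamma below 1. The hypothetical class below compares a discounted estimate with an undiscounted one after a variant’s conversion rate jumps from 10% to 30%, using synthetic outcome streams.

```python
class DiscountedBeta:
    """Beta(1, 1) posterior whose past evidence decays by gamma per observation."""

    def __init__(self, gamma=0.99):
        self.gamma = gamma  # gamma = 1.0 keeps all history with equal weight
        self.successes = 0.0
        self.failures = 0.0

    def update(self, converted):
        # Fade old evidence first so recent outcomes dominate the estimate.
        self.successes *= self.gamma
        self.failures *= self.gamma
        if converted:
            self.successes += 1
        else:
            self.failures += 1

    def mean(self):
        return (self.successes + 1) / (self.successes + self.failures + 2)


# Synthetic outcomes: 10% conversion, then 30% after a marketing change.
before = [i % 10 == 0 for i in range(2000)]
after = [i % 10 < 3 for i in range(500)]

static = DiscountedBeta(gamma=1.0)
adaptive = DiscountedBeta(gamma=0.99)
for model in (static, adaptive):
    for converted in before + after:
        model.update(converted)

# The static estimate stays near 0.14; the adaptive one moves close to 0.3.
print(f"{static.mean():.3f} {adaptive.mean():.3f}")
```

A gamma of 0.99 gives an effective memory of roughly the last 100 observations per variant; a value closer to 1 reacts more slowly but is less noisy.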

The practical tradeoff compared to rules-based personalization is not knowing in advance which version of the site an individual visitor is going to see (from among the versions you’ve already blessed) in return for even higher performance and testing many ideas at once.

You can get the best from both A/B testing and personalization at the same time while off-loading a lot of the rote tasks of optimization to a machine. You can spend more time understanding your prospects and ideating new approaches to getting prospects to convert.

So which technique do you use when? I suggest:

An Example of A/B Testing and Personalization in Action: Chime

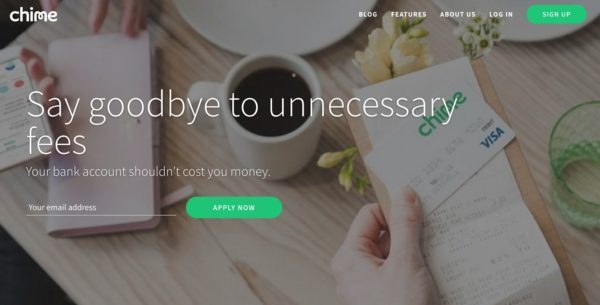

Chime is an online bank designed to help members achieve financial wellness by eliminating unnecessary fees. They offer a mobile app that keeps members in control of spending and helps members form a healthy savings habit through automation. Chime’s lean, experienced, and data-driven marketing team wanted to drive more new customer sign-ups.

They began by focusing on a few experiences on the page using predictive personalization. Within a week of their initial brainstorm, they’d created eight headlines, two new pieces of body content, and a new video to test on their homepage which sees thousands of page views per day. Together, these ideas meant Chime was testing 54 different versions of their homepage.
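The count of 54 comes from combining options across page slots, since versions multiply rather than add. A quick check, assuming each slot also includes the existing control (the current headline, the current body content, and a no-video option); this breakdown is an assumption that happens to reproduce the 54 figure:

```python
from math import prod

# Assumption: each slot offers the existing option plus the new ideas.
options_per_slot = {
    "headline": 1 + 8,  # current headline + eight new headlines
    "body": 1 + 2,      # current body content + two new pieces
    "video": 1 + 1,     # no video + the new video
}
versions = prod(options_per_slot.values())
print(versions)  # 54
```

Because versions multiply across slots, adding only a few more options in the second round was enough to reach 216 versions, four times the first round’s 54.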

Chime’s initial predictive personalization tests

Examples of initial headline tests.

An overview video from Chime CEO and co-founder, Chris Britt.

New body copy and images.

Chime’s Initial Results

After six weeks, they saw an 8% lift. How? Each visitor was automatically slotted into one of the millions of possible segments based on what the predictive personalization system knew about that visitor.

By watching visitor behavior, the system learned which combinations of a headline, body copy, and video performed best for each segment. Within hours, the system began showing the higher-performing combinations more often.

Second Round of Variations

While an 8% lift was good for the business, the team wanted more and doubled down on what worked.

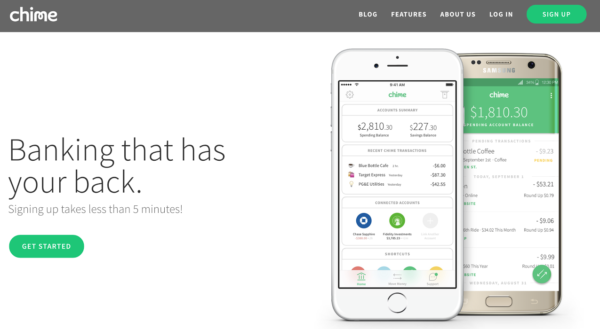

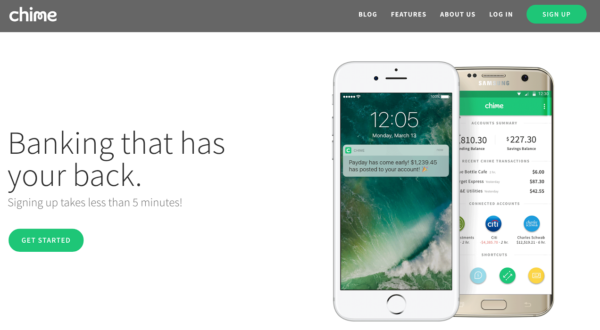

They tried new hero photography, another video, and new body copy based on what they had seen resonating with most visitors to date. These ideas brought their homepage test up to 216 versions of the page.

Product-centric images showing the Chime app in the hero module.

A second video focused on automatic savings.

Different images and body copy.

Four weeks later, Chime saw a 79% lift in new customer acquisition through their homepage compared to a holdout group that permanently saw the base Chime website.
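Lift against a holdout is usually reported as the relative increase in conversion rate, which is the assumption in this sketch. The counts are hypothetical and chosen only so the arithmetic produces a 79% figure; they are not Chime’s data.

```python
def relative_lift(treated_conv, treated_n, holdout_conv, holdout_n):
    """Relative lift of the treated group's conversion rate over the holdout's."""
    treated_rate = treated_conv / treated_n
    holdout_rate = holdout_conv / holdout_n
    return (treated_rate - holdout_rate) / holdout_rate


# Hypothetical counts: 1.79% vs 1.00% conversion.
lift = relative_lift(treated_conv=537, treated_n=30000,
                     holdout_conv=100, holdout_n=10000)
print(f"{lift:.0%}")  # 79%
```

Comparing rates rather than raw counts matters because the holdout is typically much smaller than the personalized group.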

Learnings from Testing with Predictive Personalization

In addition to the performance gains, Chime’s approach to combining testing and personalization yielded them some valuable insights into their customers’ preferences.

For example, they discovered that device type, time of day, and geography are all important personalization dimensions for their visitors.

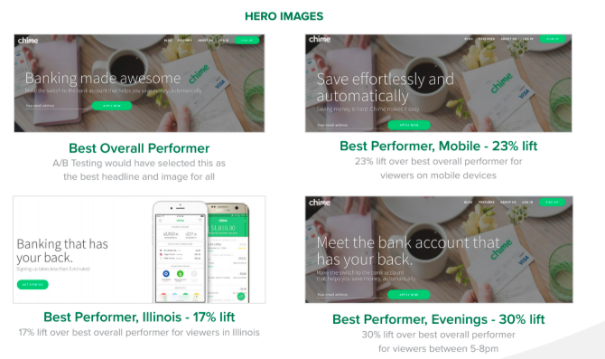

The example below shows the best overall headline and image combination, “Banking made awesome.” This is the single page all users would have seen if Chime had run a series of A/B tests.

However, predictive personalization yielded them 23% more lift for users on mobile devices by identifying that the on-site message “Save effortlessly and automatically” performed better for these users.

Likewise, Chime was able to generate 17% more lift by personalizing by geography, and 30% more lift by tailoring their headline based on time of day.

Segmenting test results has borne fruit for others before, and predictive personalization tools automate this process.

Chime focused first on building momentum by testing a few ideas early. They learned what worked and then doubled down on high performing ideas, driving material lift quickly.

In this case, A/B testing without personalization would have taken a lot longer and left a lot of money on the table.

A Second Example of A/B Testing and Personalization in Action: Perkville

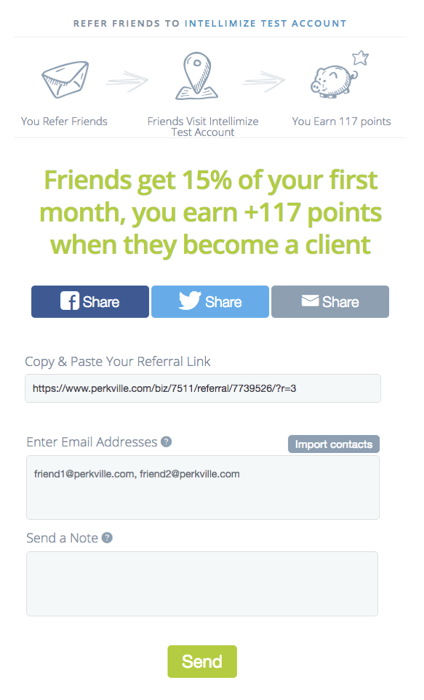

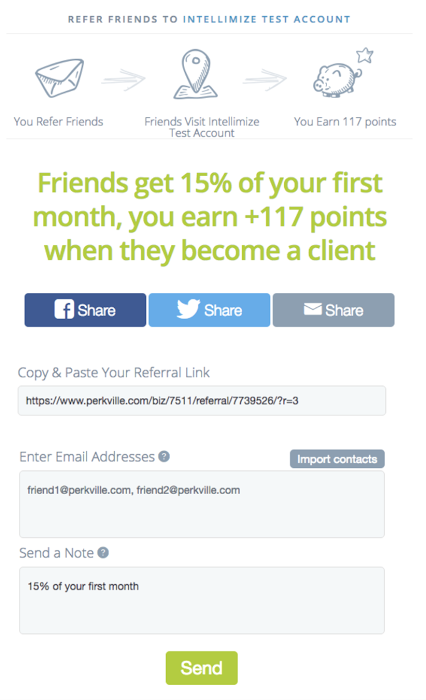

Perkville is an all-in-one referral and rewards program that helps businesses drive customer loyalty and grow revenue. The company wanted to increase the volume of customer referrals for its clients’ businesses. They decided to focus first on optimizing their referrals page, which includes a form that members complete to refer friends.

Perkville’s Base Member Referral Form (No Changes).

The relatively low daily page view volume on the send referral page (fewer than 2,000 per day) made conventional A/B testing difficult. It would routinely take weeks or months to test a single new idea and deliver statistically significant results. As a result, Perkville tried combining A/B testing and personalization through predictive personalization as a way to test more ideas and drive more referral sign-ups from their site.
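A standard sample-size formula for comparing two conversion rates shows why low traffic makes one-idea-at-a-time testing slow. The baseline rate (5%) and target improvement (a 10% relative lift) below are hypothetical, not Perkville’s figures; the daily traffic uses the upper bound of under 2,000 page views per day.

```python
import math
from statistics import NormalDist


def visitors_per_variant(p_control, p_variant, alpha=0.05, power=0.8):
    """Approximate visitors needed per variant for a two-sided two-proportion test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_control + p_variant) / 2
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p_control * (1 - p_control)
                                      + p_variant * (1 - p_variant))) ** 2
    return math.ceil(numerator / (p_variant - p_control) ** 2)


# Hypothetical: 5% baseline referral rate, hoping to detect a 10% relative lift.
n = visitors_per_variant(0.05, 0.055)
daily_views = 2000                     # upper bound on the page's daily traffic
days = math.ceil(2 * n / daily_views)  # control and variant share the traffic
print(n, days)
```

Under these assumptions a single A-versus-B comparison needs roughly 31,000 visitors per variant, or about a month of traffic, before it can be called.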

Initially, the company focused on three experiences on their referrals page for testing:

1. Personalizing the call to action

2. Simplifying the form (testing headlines and other changes)

3. Changing the appearance and order of social links

Examples of Tests

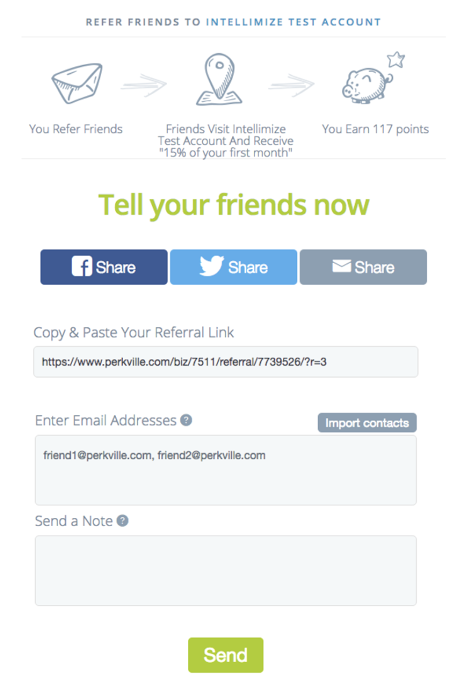

Perkville tested changes to their existing form including pre-populating the “send a note” field which allows the referrer to include a personal note to the recipient. They also tested simplifying the headlines and information at the top of the form.

Pre-populate “Send a note” with offer.

Simplify headline.

Perkville also tested a number of variations of the call to action text in the “Send” button at the bottom of the form, including “Share the love”, “Tell your friends”, and “Share and earn x points if they accept”.

Results

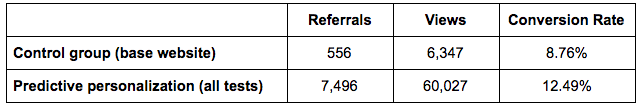

Five weeks after starting the initial tests, Perkville saw the following results from their predictive personalization tests.

Overall results:

- Improvement: 42.5%

- Statistical significance: >99%

Call to action results:

- Best performer overall: “Share the love”

- Best performer (desktop devices): “Tell your friends”

- Best performer (tablet devices): “Share and earn 117 points if they accept”

If Perkville had used A/B testing without personalization, they would have served the “Share the love” call to action to all users. Instead, by combining A/B testing with personalization through predictive personalization, Perkville automatically optimized the call to action for each visitor, delivering more conversions. Perkville found that the best-performing call to action differed by device type, just as Peep suggested is likely to happen when doing A/B testing on its own.

These automated optimizations combined A/B testing and personalization to deliver better results overall while also yielding useful insights about which variations performed best.

Conclusion

Not all financial services and SaaS companies are able to do what Chime and Perkville did. For those that can only have a single version of a page up at a time, A/B testing without personalization may very well be the right approach to driving incremental conversions. However, combining your A/B testing and personalization efforts generally yields better results, so I believe it is worth considering as you try to drive better conversion rates for your company.

A/B testing and personalization are often viewed as completely separate efforts. I hope I’ve persuaded you that they are really both conversion optimization tools that are best used together to maximize results for your business today.

Digital & Social Articles on Business 2 Community
